Morristown Emergency Medicine Residency Program Case Study

See how emergency medicine residents used DDx to prepare for their oral board exams — and what the data showed.

Overview

Evaluating DDx as a supplemental tool for board preparation

Morristown Medical Center's Emergency Medicine Residency Program evaluated DDx, an AI-driven case learning platform by Sketchy, as a supplemental tool for oral board preparation. Over 13 weeks, 30 EM residents (PGY-1 to PGY-3) completed assigned DDx cases alongside traditional oral board sessions. Pre- and post-intervention surveys assessed engagement, confidence, comfort, cognitive load, usability, and study habits — providing one of the most structured evaluations of DDx in a residency setting to date.


The challenge

Traditional board prep is inconsistent, resource-intensive, and hard to scale

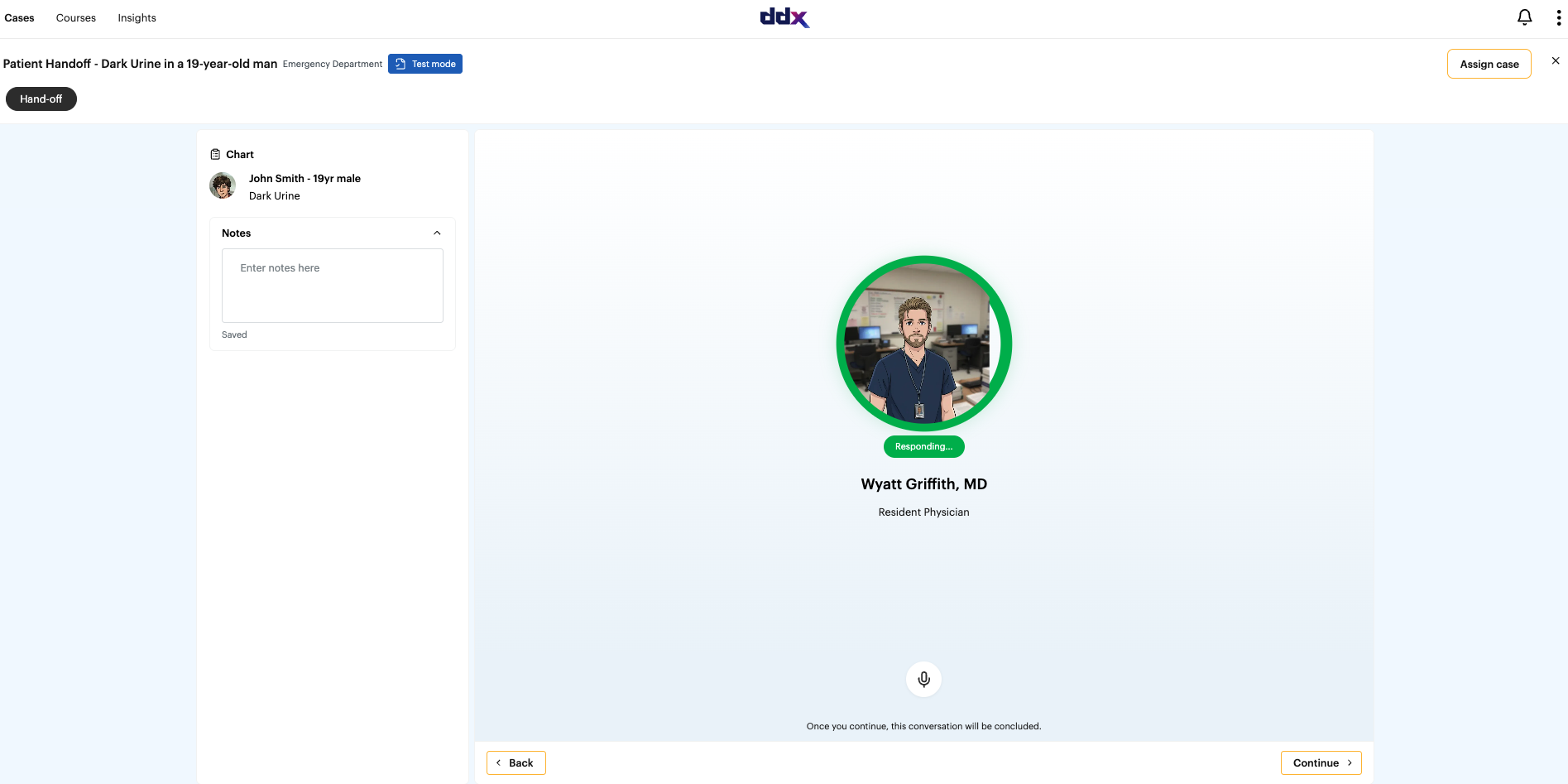

The residency program sought a scalable case-based learning solution that would allow emergency medicine residents to practice clinical reasoning and diagnostic decision-making through realistic patient encounters while supplementing traditional oral board preparation.

Emergency medicine oral board exams require residents to think out loud, manage uncertainty, and demonstrate systematic reasoning under scrutiny. Traditional preparation (case books, study partners, self-directed review) is inconsistent, time-consuming, and rarely simulates the real exam experience. It also requires multiple faculty examiners and, in many programs, access to simulation labs, which makes it resource-intensive to deliver at scale. Program directors needed an accessible option that could supplement in-person sessions while consistently building structured clinical reasoning.

The solution

Why DDx by Sketchy?

DDx offered accessibility benefits and resource savings compared with traditional oral board preparation. Because residents could practice asynchronously and at scale, DDx reduced reliance on faculty time and specialized facilities. This provided more consistent preparation opportunities and greater scheduling flexibility for the residency program. Stress levels were notably lower with DDx than in traditional oral board review sessions.

How did DDx by Sketchy fit?

- Residents were assigned 2 DDx cases per week for 13 weeks (26 cases total)
- Residents completed cases asynchronously alongside 3 in-person oral board sessions
- Pre- and post-surveys measured engagement, confidence, comfort, cognitive load, usability, and study habits
- Performance was analyzed by case completion volume and residency year to identify dose-response effects

Cases Included:

- Residency-level, EM-focused DDx cases across the 13-week period

The results

Confidence gains, strong engagement, and a clear dose-response effect

Testimonials

What did faculty say?

AI-enabled case practice as a scalable complement to board prep

The Morristown pilot provided meaningful evidence for DDx's role as a scalable, accessible complement to traditional oral board preparation. While DDx effectively reinforces clinical reasoning, the study also identified opportunities to further improve oral board readiness, including residency-level cases designed to incorporate higher cognitive load, time pressure, and examiner-style formatting. Those cases are now in development, and tailoring DDx content to this level of training is expected to yield even greater gains in both clinical reasoning and oral board preparation.
