Every accredited Nurse Practitioner program is held to the same outcome standard, but not the same assessment infrastructure.

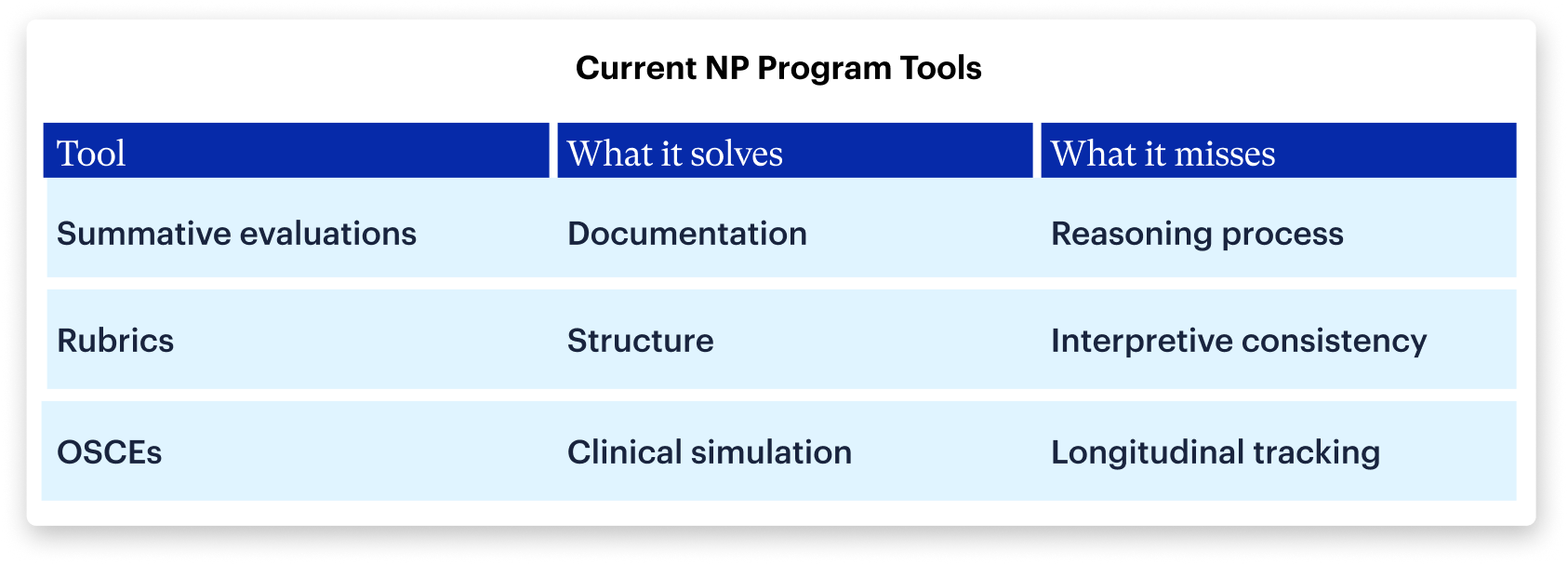

Organizations like CCNE and ACEN define what graduates must be able to do before entering practice. What they don't define is how programs should evaluate the thinking that leads to those decisions. A 2022 scoping review of U.S. primary care NP programs found "a lack of shared conceptualizations of diagnostic reasoning" across the field. In effect, most programs rely on tools that measure what students produce rather than how they think.

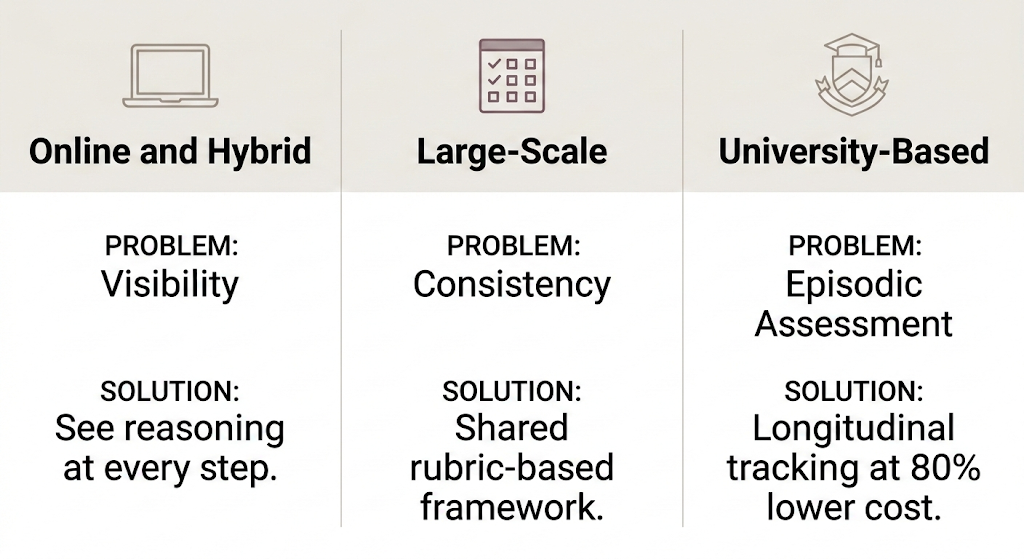

That gap looks different depending on how a program is structured:

- Online and hybrid programs struggle with visibility: faculty rarely observe reasoning in real time

- Large-scale programs struggle with consistency: shared evaluation standards break down across dozens of sites and preceptors

- Smaller cohort programs struggle with recognition, which affects everything from securing clinical placements to attracting the resources students need to succeed

Across all three, the underlying challenge is the same. What differs is not faculty intent or quality — it's what each program structure actually allows faculty to see.

Online and hybrid NP programs: The clinical reasoning visibility problem

Online and hybrid NP programs face a structural visibility problem: faculty rarely directly observe students during clinical encounters, and competency evaluation depends heavily on information reported after the fact — typically through online skills submissions or onsite clinical intensives and workshops. Because direct observation is limited to these structured touchpoints, what faculty see is a snapshot, not the full picture of how a student thinks in practice.

Challenges:

- Clinical reasoning is inferred rather than directly observed

- It becomes difficult to distinguish mastery from memorization

- Faculty may struggle to distinguish reasoning errors from limited clinical exposure, and addressing either requires time programs can rarely spare

When reasoning gaps go undetected early, they often surface later during higher-stakes evaluations, when remediation becomes more disruptive for both students and programs.

Large-scale NP programs: The consistency problem in clinical reasoning assessment

Large-enrollment programs face a different structural challenge: when hundreds of students are placed across dozens of clinical sites, maintaining a shared understanding of what strong clinical reasoning looks like becomes a coordination problem that grows with every new cohort.

Challenges:

- Interpretive variance: Different preceptors may define “competent reasoning” differently.

- Limited preceptor capacity: With constrained preceptor resources, programs often lack consistent visibility into how clinical standards are applied across placements.

- Documentation risk: Subjective evaluations can be difficult to standardize, audit, and defend under regulatory scrutiny.

Even well-designed programs struggle to ensure that reasoning standards are applied consistently across sites. The result is a difficult reality for program directors: they remain accountable for outcomes they cannot fully observe or verify.

Small cohort NP programs: The recognition problem

Programs with smaller cohorts often foster close faculty relationships and supportive learning environments. But beneath that intimacy lies a structural challenge: limited program recognition creates compounding disadvantages that even the most dedicated faculty can't fully offset.

How limited recognition constrains program capacity:

- Less visibility makes it harder to compete for quality clinical placements

- Limited resources mean students navigate a fragmented mix of tools without unified support

- Faculty are stretched thin, filling gaps that better-resourced programs solve through infrastructure

As a result, student success becomes dependent on individual effort rather than systemic support.

The shared challenge across NP programs

Despite these structural differences, NP programs are ultimately trying to solve the same problem: How do you track an internal, evolving cognitive process using external, static tools?

Yet none of these tools provides all of the following at once:

- Direct visibility into clinical reasoning

- Consistent evaluation across sites

- Longitudinal tracking across training

What NP education lacks is shared infrastructure built to do two things: identify gaps in clinical reasoning early and trigger remediation before those gaps follow a student into clinical practice, all without adding to the workload of already-stretched faculty.

To bridge the education-to-practice gap, programs need tools that make reasoning:

- Observable

- Standardized

- Longitudinal

These qualities need to hold regardless of clinical site, preceptor variability, or curriculum delivery model.

DDx by Sketchy: A new standard for clinical reasoning assessment in NP programs

DDx by Sketchy recreates the full arc of an NP patient encounter, from initial assessment and differential diagnosis through diagnostics, management decisions, and patient education. Built by clinicians, it gives faculty something they've rarely had: direct visibility into how students think.

Rather than evaluating only the artifacts of reasoning, DDx moves students step by step through a realistic clinical case, from:

- Initial patient presentation

- History and physical exam findings

- Differential diagnosis generation

- Diagnostic testing decisions

- Treatment and management planning

- Patient education

At each stage, the platform captures how the student is thinking, not just the final answer.

“[The students] are learning so much that even when the cases are challenging, they feel like they walk away with a better understanding of how to care for that patient.”

- Bryanna Scherer, MSN, APRN, AGNP-C, OCN, Assistant Professor of Nursing at Missouri Baptist University

Faculty can see:

- Which diagnoses students initially consider

- How they prioritize and narrow their differential

- Which clinical findings influence their reasoning

- Where incorrect assumptions or premature closure occur

This gives NP educators something they have historically lacked: a view of clinical reasoning as it develops, not just its end product.

In Hawaii Pacific University's Psychiatric Mental Health Nurse Practitioner program, 88% of students said they felt more prepared for OSCEs and clinical check-ins.

For online and hybrid programs

DDx provides direct visibility into student reasoning by showing:

- How learners construct and narrow a differential diagnosis

- Where they hesitate or reconsider

- Which diagnoses they prematurely rule out

This allows faculty to identify reasoning gaps early enough to intervene.

For large-scale programs

DDx introduces a shared, rubric-based framework applied consistently across learners, regardless of clinical site or preceptor.

Program leaders can:

- Monitor individual and cohort-level reasoning trends

- Generate defensible assessment data

- Maintain consistent evaluation standards across distributed sites

For smaller cohort programs

DDx directly addresses the structural barriers smaller programs face, providing infrastructure and resource efficiency that were previously out of reach.

With DDx, programs can:

- Build a rigorous, trackable record of student reasoning development across the full curriculum

- Deploy cases at up to 80% lower cost than standardized patient OSCE equivalents, removing the resource barrier without sacrificing quality

- Cover all NP specialties (primary care, acute care, psychiatric, geriatric, and pediatric), reducing dependence on hard-to-secure clinical placements for foundational reasoning practice

Smaller programs can deliver the same depth of clinical reasoning assessment as larger institutions, without the overhead.

Designed to integrate seamlessly across the NP curriculum

DDx cases can be scaffolded across all NP specialty tracks and training stages, including:

- Initial implementation in the 3 Ps (Pharmacology, Pathophysiology, and Advanced Health Assessment)

- Continued, modular implementation in didactic courses and clinical practicums

Cases increase in complexity as students advance, helping programs align reasoning assessment with the progression of clinical training.

This creates a continuous thread of reasoning evaluation from didactic learning through advanced clinical practice.

The bottom line…

Every program is accountable for the same outcome: competent graduates with solid medical knowledge who can think clearly, justify their decisions, and practice safely from day one. Clinical reasoning assessment cannot remain inferred, inconsistent, or episodic. It must be visible, shared, and tracked across the full continuum of training.

Across NP pilots at Fresno State, University of Cincinnati, and Hawaii Pacific University, 85–95% of students said they would recommend DDx to peers or wanted it continued or expanded in their program.

Stop inferring what your students are thinking. Discover how DDx by Sketchy can make clinical reasoning assessment visible, consistent, and trackable across your NP program.