Traditional didactic tools often struggle to meet learners' needs for hands-on practice/real-world practice/etc. To help meet this need, faculty have begun leveraging generative AI to build clinical cases and provide their learners with more ways to practice applying their clinical knowledge. Survey data shows 54% of medical faculty use ChatGPT for clinical vignette construction — the single most common use case reported — and an estimated 33% have integrated it into their regular workflows (Cross et al., Cureus, 2023).

The problem is what comes after. 74% of those same faculty cited incorporation of misinformation into teaching materials as their top concern (Cross et al., Cureus, 2023). Also, when the case arrives without rubric-based assessment, expert review, or structured feedback, learners work through a scenario without any of the scaffolding that makes it educationally useful.

Time is limited, and educators want to create cases on-demand, without sacrificing the feedback that makes those cases worth doing. The gap between a generated scenario and a complete teaching tool is real, and it is closable.

What research reveals about AI-generated clinical cases in medical education

A 2025 study in BMC Medical Education found clinical inaccuracies in nearly 1 in 6 LLM-generated cases, including a case that recommended a diagnostic test not used in standard clinical practice for that condition (Zhang et al., 2025).

Beyond accuracy, the parts of the case designed to actually assess learners — the questions, the differential diagnosis, the examination findings — scored lowest across every faculty evaluation. In the Zhang et al. rubric, these were the three weakest dimensions of LLM-generated content:

- MCQ relevance and appropriate difficulty

- Comprehensiveness of differential diagnoses

- Diagnostic utility of examination descriptions

A qualitative study from Rutgers found that none of the participating faculty could get ChatGPT to produce cases with the clinical complexity needed for meaningful learner assessment — cases that could meet the specific learning objectives faculty had defined around chief concern, differential diagnosis, history, physical exam, and overall case coherence. Reviewer comments included "objectives largely not met" and "does not meet the stated objectives with any depth" (Wong et al., Cureus, 2024).

What a complete clinical case requires for competency-based assessment

What turns a clinical case simulation into a teaching tool is the structure built around it: expert review, rubric-based scoring, and the performance data that follows.

Clinical accuracy and expert review

Before a case reaches a learner, it requires subject matter expert (SME) review for diagnostic logic, clinical plausibility, and alignment with the learning objectives. As the research shows, inaccuracies in AI-generated cases are often subtle enough to go undetected without clinical expertise.

Rubric-based assessment tied to accredited competencies

A complete case measures specific competencies rather than general performance.

- Clinical reasoning assessment should cover the full diagnostic process on how learners gather and interpret information to guide directed testing and management plans.

- Communication assessment should evaluate how learners convey clinical information, demonstrate empathy, and collaborate with patients and teams.

Performance data that enables remediation

Faculty need tools that are efficient for them, but also meaningful for learners. Which competency dimensions are holding across the cohort, and which aren’t? Where do learners need targeted support before gaps compound? That is the data that makes early remediation possible.

Built by medical professionals, DDx is the trusted, AI-enabled clinical readiness platform for realistic, engaging, and consistent case simulation across every competency and phase of clinical education.

How purpose-built case generation closes the accuracy and assessment gap

Most programs can find cases that are close to what they need. Getting to cases that are exactly right, matched to a specific specialty, setting, and learner level, takes time that is rarely available. That is the gap custom case generation is designed to close.

DDx's Custom Case Generation gives educators a direct path from what they want to teach to how learners actually work through.

For programs with more specific needs, a particular specialty focus, a niche clinical context, or outcomes that require cases built from scratch, DDx partners directly with faculty to develop custom cases aligned to their course outcomes.

- Expert review: Every DDx case is reviewed by subject matter experts before it reaches a learner, so the accuracy gap that affects roughly 1 in 6 AI-generated cases does not reach your curriculum.

- Rubric-based assessment: DDx builds clinical reasoning or communication rubrics into every case, mapped to ACGME, AACOM, AAMC, INACSL, NONPF, and ARC-PA, so assessment is consistent, competency-aligned, and defensible.

- Performance data: DDx surfaces cohort-level insights faculty can act on, giving programs the visibility to identify struggling learners and intervene early rather than after gaps have compounded.

The distinction that matters

Using a general LLM to generate a scenario gives you a case.

Using a platform built for clinical education gives you a case with structured insights and assessment across every competency dimension, SME review before it reaches a learner, and the performance data to understand what your cohort actually needs.

Generating the scenario is the starting point. Understanding how your learners interacted with the encounter tells you about their readiness.

If your program is working around case gaps or building the review layer manually, see how DDx supports custom case development with a free trial here.

Frequently asked questions

Can general AI tools like ChatGPT generate clinical cases for medical education? Yes, and many faculty are already using them. The limitation is not generation, it is what comes after. AI-generated cases require expert review for clinical accuracy and do not include rubric-based assessment or competency-aligned feedback without additional development work.

What are the risks of using LLM-generated cases without expert review? Research has documented AI hallucinations in roughly 1 in 6 LLM-generated clinical cases, including clinically inaccurate diagnostic recommendations. Faculty who use general AI tools still bear responsibility for accuracy review, competency alignment, and assessment design, work that adds time rather than saves it.

What is the difference between a clinical case and a clinical teaching tool? A clinical case presents a scenario. A clinical teaching tool includes structured assessment tied to specific competencies, rubric-based feedback learners can act on, and performance data faculty can use for remediation. The assessment layer is what makes a scenario educationally useful.

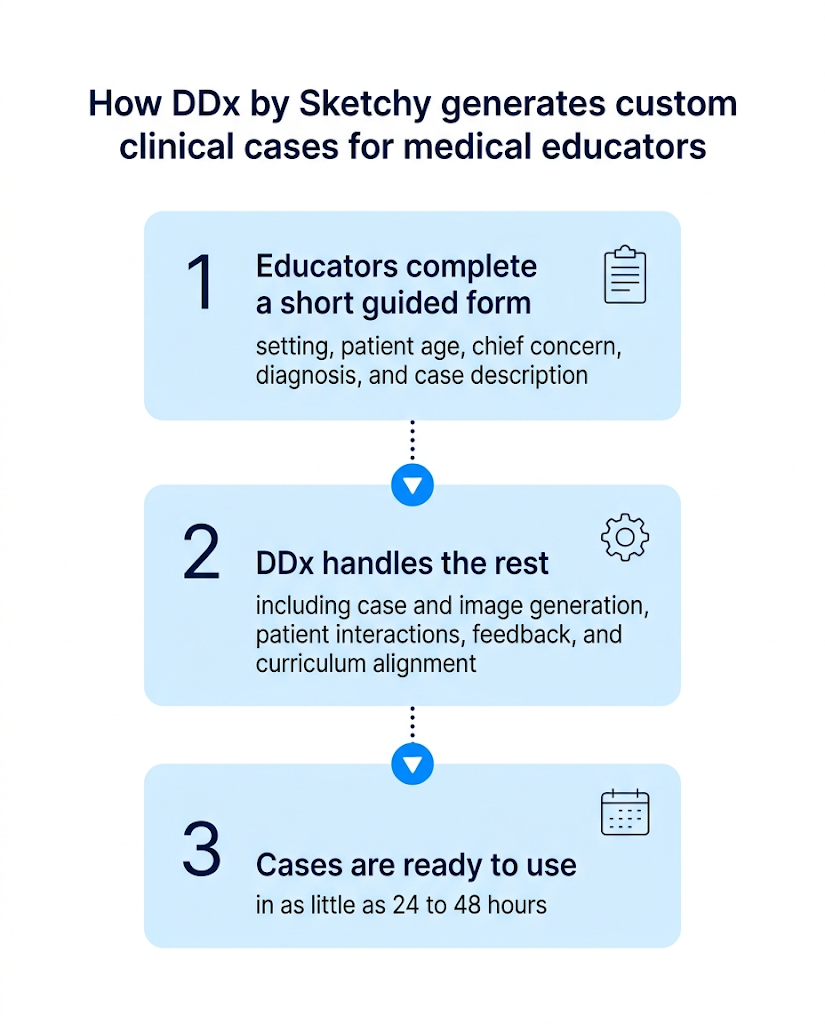

How does DDx handle custom case generation? Educators complete a short form specifying setting, patient age, chief concern, diagnosis, and case description. DDx generates the full case, including patient interactions, images, feedback, and curriculum alignment, automatically. Cases are typically ready within 24 to 48 hours. For more specialized needs, DDx works with faculty directly to develop cases tailored to specific course outcomes.

How do DDx rubrics align with accreditation standards? DDx's rubric dimensions are mapped to national clinical competency standards across ACGME, AACOM, AAMC, INACSL, NONPF, and ARC-PA. The 1-to-3 performance scale reflects developmental stages, preliminary, progressing, and proficient, that align with how programs already track learner progress.

References

Zhang Q, Huang Z, Huang Y, Wang G, Zhang R, Yang J, Cheng Y, Chen B, Wang H, Qiu K, Chen H. Generative AI in medical education: feasibility and educational value of LLM-generated clinical cases with MCQs. BMC Med Educ. 2025 Oct 27;25(1):1502. doi: 10.1186/s12909-025-08085-8. PMID: 41146115; PMCID: PMC12560302.

Wong K, Fayngersh A, Traba C, Cennimo D, Kothari N, Chen S. Using ChatGPT in the Development of Clinical Reasoning Cases: A Qualitative Study. Cureus. 2024 May 31;16(5):e61438. doi: 10.7759/cureus.61438. PMID: 38953081; PMCID: PMC11215020.

Cross J, Robinson R, Devaraju S, Vaughans A, Hood R, Kayalackakom T, Honnavar P, Naik S, Sebastian R. Transforming Medical Education: Assessing the Integration of ChatGPT Into Faculty Workflows at a Caribbean Medical School. Cureus. 2023 Jul 5;15(7):e41399. doi: 10.7759/cureus.41399. PMID: 37426402; PMCID: PMC10328790.